A customer asked me to monitor the age of the pattern files of the Symantec Endpoint Protection 11 Client (SEP11) on its server systems.

As I couldn’t find a Symantec SEP Management Pack, I decided to create one myself.

Since others might find it useful too, I decided to show it step by step.

Let’s start:

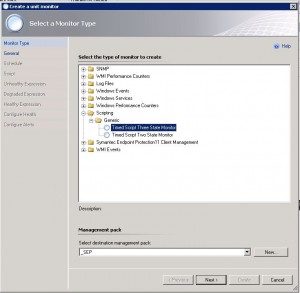

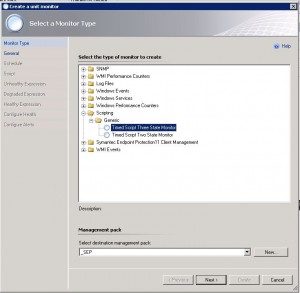

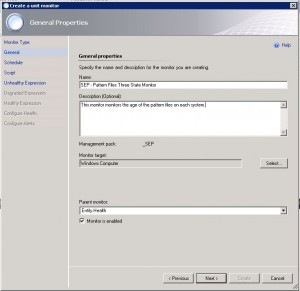

In the Authoring view, select Monitors and click “Create a Monitor” on the right side.

1. Select the Monitor type to create: “Timed Script Three State Monitor”

2. Change the destination management pack; for example, create a new one called “_SEP”

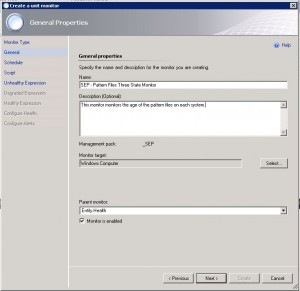

3. Name the Monitor and add a description

4. Select the monitor target: Windows Computer (in our case, all computers)

5. Make sure that “Monitor is enabled” is checked

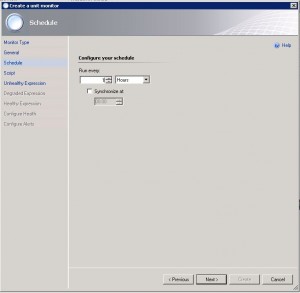

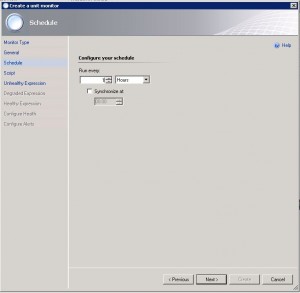

6. Set how often the monitor runs and checks the pattern file age

(Normally once a day is enough, but then it also takes up to a day for the alerts to close automatically after the patterns have been updated.)

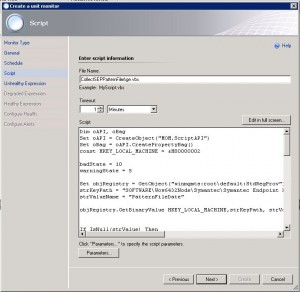

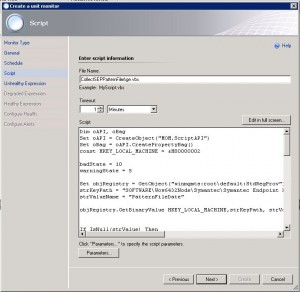

7. Add a script name, for example SEPPatternFileState.vbs (make sure the script name is unique to avoid conflicts with other Management Packs)

8. Add the script that reads the pattern file date from the computer’s registry and calculates its age

```vbscript
' Reads the SEP pattern file date from the registry and returns its age
' in days plus a GOOD/WARNING/BAD state in a property bag for SCOM.
Dim oAPI, oBag, objRegistry
Dim strKeyPath, strValueName, strValue
Dim y, m, d, diffdays, state

Const HKEY_LOCAL_MACHINE = &H80000002
Const warningState = 5   ' age in days at which the state becomes WARNING
Const badState = 10      ' age in days at which the state becomes BAD

Set oAPI = CreateObject("MOM.ScriptAPI")
Set oBag = oAPI.CreatePropertyBag()
Set objRegistry = GetObject("winmgmts:root\default:StdRegProv")

strValueName = "PatternFileDate"

' First try the Wow6432Node key (64-bit Windows) ...
strKeyPath = "SOFTWARE\Wow6432Node\Symantec\Symantec Endpoint Protection\AV"
objRegistry.GetBinaryValue HKEY_LOCAL_MACHINE, strKeyPath, strValueName, strValue

' ... then fall back to the native key (32-bit Windows)
If IsNull(strValue) Then
    strKeyPath = "SOFTWARE\Symantec\Symantec Endpoint Protection\AV"
    objRegistry.GetBinaryValue HKEY_LOCAL_MACHINE, strKeyPath, strValueName, strValue
End If

If Not IsNull(strValue) Then
    ' Byte 0 = years since 1970, byte 1 = zero-based month, byte 2 = day
    y = 1970 + strValue(0)
    m = 1 + strValue(1)
    d = strValue(2)
    ' DateSerial avoids the locale-dependent string parsing of CDate
    diffdays = DateDiff("d", DateSerial(y, m, d), Now)
Else
    ' No pattern date found (e.g. SEP not installed): report -1 days
    diffdays = -1
End If

If diffdays >= badState Then
    state = "BAD"
ElseIf diffdays >= warningState Then
    state = "WARNING"
Else
    state = "GOOD"
End If

Call oBag.AddValue("state", state)
Call oBag.AddValue("PatternDateTimeToNowDiff", diffdays)

' Event ID 101; severity 2 logs a warning event (4 would be information)
Call oAPI.LogScriptEvent("SEPPatternFileState.vbs", 101, 2, _
    "Patternstatescript delivered state " & state & ". Pattern File age is " & diffdays & " days.")

Call oAPI.Return(oBag)
```
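To sanity-check the date arithmetic outside SCOM, here is the same decoding as a small Python sketch. It assumes, exactly as the script does, that byte 0 of PatternFileDate holds the years since 1970, byte 1 the zero-based month and byte 2 the day; the function name and the sample bytes are hypothetical.

```python
from datetime import date
from typing import Optional


def pattern_age_days(raw: Optional[bytes], today: date) -> int:
    """Return the age in days of a PatternFileDate registry value.

    Assumes the layout the VBScript uses: byte 0 = years since 1970,
    byte 1 = zero-based month, byte 2 = day of month.
    """
    if raw is None or len(raw) < 3:
        # Same sentinel the script uses when no value was found
        return -1
    pattern_date = date(1970 + raw[0], 1 + raw[1], raw[2])
    return (today - pattern_date).days


# Hypothetical sample value: 39 -> 2009, 2 -> March, 14 -> 14th
sample = bytes([39, 2, 14, 0])
print(pattern_age_days(sample, date(2009, 3, 20)))  # prints 6
```

Like the script's DateDiff("d", ...), this counts whole calendar days between the pattern date and today.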

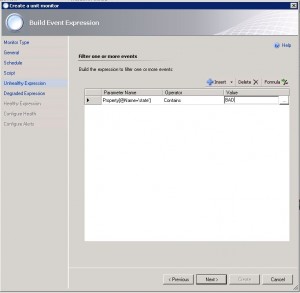

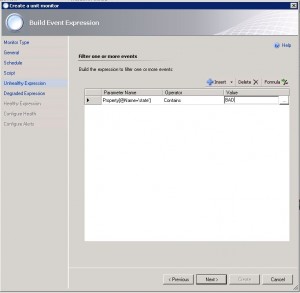

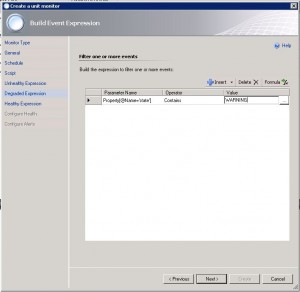

9. Add the BAD state: the monitor is unhealthy if the script returns BAD (Property[@Name='state'] Equals BAD)

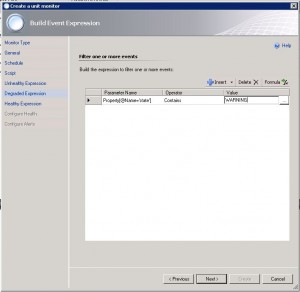

10. Add the WARNING state: the monitor is degraded if the script returns WARNING (Property[@Name='state'] Equals WARNING)

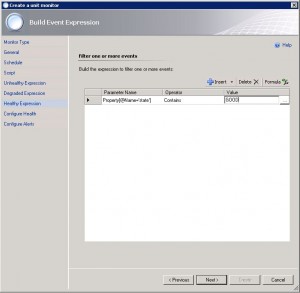

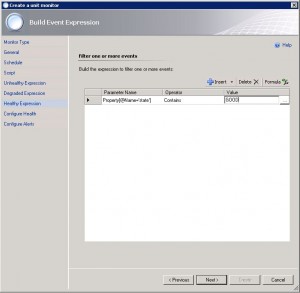

11. Add the GOOD state: the monitor is healthy if the script returns GOOD (Property[@Name='state'] Equals GOOD)

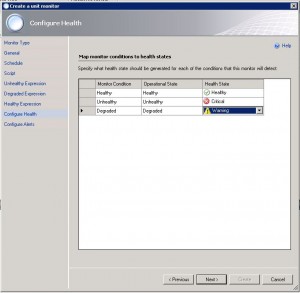

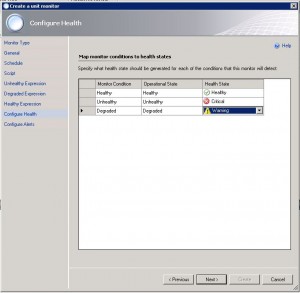

12. Map each script result to the corresponding monitor health state (BAD to Critical, WARNING to Warning, GOOD to Healthy).

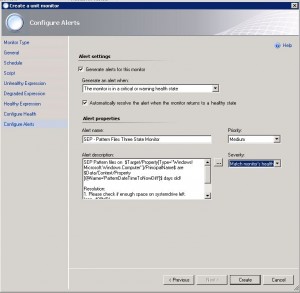

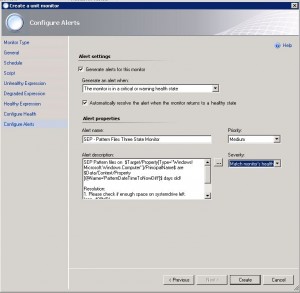
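The resulting behaviour can be modelled in a few lines of Python. This is only a model of the configuration described here, not SCOM code; it assumes the mapping BAD to Critical, WARNING to Warning and GOOD to Healthy, and an alert whenever the monitor is critical or warning. The function names are hypothetical.

```python
# Hypothetical model: script state -> SCOM health state
HEALTH_STATE = {
    "BAD": "Critical",
    "WARNING": "Warning",
    "GOOD": "Healthy",
}


def script_state(age_days: int, warning: int = 5, bad: int = 10) -> str:
    """Return the state string the script puts into the property bag."""
    if age_days >= bad:
        return "BAD"
    if age_days >= warning:
        return "WARNING"
    return "GOOD"  # also covers -1 (no pattern date found)


def raises_alert(age_days: int) -> bool:
    """Alert when the monitor is in a critical or warning health state."""
    return HEALTH_STATE[script_state(age_days)] in ("Critical", "Warning")


for age in (-1, 4, 5, 9, 10):
    print(age, script_state(age), HEALTH_STATE[script_state(age)], raises_alert(age))
```

Note that a computer without SEP (age -1) stays healthy, which is why targeting all Windows computers does not flood the console with alerts.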

13. Select the check box to generate alerts for this monitor

14. Set the “Generate an alert when” drop-down to “The monitor is in a critical or warning health state”

15. Enter an alert name (this is the title you will see when the alert is raised)

16. Change the severity to: “Match monitor’s health”

17. Add an alert description. Mine is below; it includes the computer name, the age of the pattern files and a few common resolutions:

SEP Pattern files on $Target/Property[Type="Windows!Microsoft.Windows.Computer"]/PrincipalName$ are $Data/Context/Property[@Name='PatternDateTimeToNowDiff']$ days old!

Resolution:

1. Check that enough free space is left on the system drive (approx. 400 MB).

2. Check that the LiveUpdate server is reachable.

3. Check that the SEP service is running.

4. Reinstall the SEP client.

Conclusion

Using these steps, you can easily add the SEP pattern file age monitor to your SCOM environment.

Things you can do if you want to make it more professional:

- build a management pack that includes a discovery for computers where SEP is installed

- add parameters for overrides, so the warning and error thresholds can be changed without editing the script

(currently it warns when the patterns are 5 or more days old and raises an error when they are 10 or more days old)

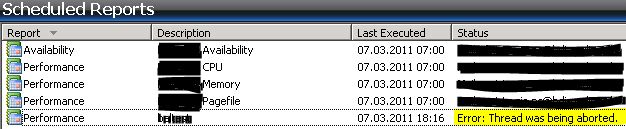

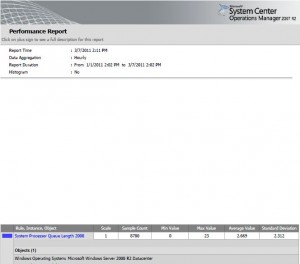

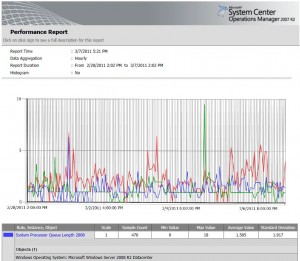

- the script can also be used to build a performance collection rule for the pattern file age
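For the override idea, the thresholds would have to come from the monitor configuration instead of being hard-coded. A minimal Python sketch of the parsing logic, assuming the two thresholds are passed to the script as command-line arguments (the argument order and the function name are hypothetical; in the VBScript itself they would be read from WScript.Arguments):

```python
def read_thresholds(args, default_warning=5, default_bad=10):
    """Parse optional 'warning bad' thresholds (in days) from script arguments.

    Hypothetical convention: first argument = warning threshold,
    second argument = error threshold; missing values use the defaults.
    """
    warning = int(args[0]) if len(args) > 0 else default_warning
    bad = int(args[1]) if len(args) > 1 else default_bad
    if warning > bad:
        raise ValueError("warning threshold must not exceed the error threshold")
    return warning, bad


print(read_thresholds([]))          # falls back to the current 5 / 10 days
print(read_thresholds(["3", "7"]))  # overridden thresholds
```

Keeping the current values as defaults means the monitor behaves exactly as before until someone sets an override.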

But done this way, it takes only about 5 minutes.

Kind regards,

Benedikt